TITAA #56: Interfacing with Features in Time Loops

Creating UIs - Narrative & World Knowledge - Int Fic - Using Features - Time Travel TV - Tough Books

Again no intro piece, since there are some very meaty things going on below. Three mega-topics of the past couple of weeks: UIs created by multimodal LLMs and/or toolchains, new ways of applying latent features for creative direction (text and image) with sparse autoencoders, and a ton of work on narrative/games/creativity at recent conferences. I also read some hefty books and watched a lot of TV, but I’m abbreviating those recs heavily and putting the details in the separate recs mailing for paid subscribers, due to length.

A few meta comments on building AI Things: I am happy to see people who can’t program a JavaScript frontend suddenly making apps with LLM help. And I guess it’s not a surprise that a solid fraction of the app attempts visible in social media threads are simple proto-games. They are playing, after all.

But having programmed with LLM help for a while now, I feel confident in saying that designers and developers aren’t really going away. It would be nice if creative tech workers could meet up in the middle on friendly employment grounds. What if companies with AI-assisted creativity tools also offered a way to reach out to professionals (designers, writers, artists) to take their prototypes to the next level? What if Claude said, “You seem to be unhappy with my CSS design. I can recommend a few places to find a designer!” Or GPT4 said, “I saw that thumbs down on my Faulkner impression. I can recommend some editors and writers to work with.”

Just a thought.

TOC (links on the web page):

AI Creativity Tools (UI creation, Interpretable Features, Video/Audio Quickies, Procgen & Misc)

Games News (and a load of new Narrative Research)

Recs (Books & TV, abbreviated)

AI Creativity Tools

Creating UI/Apps With AI

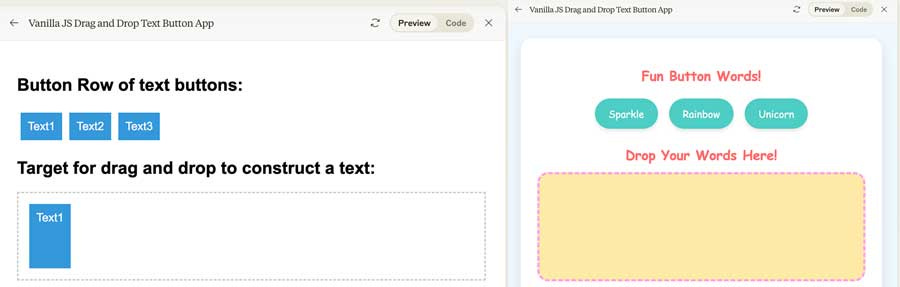

Claude 3.5 Sonnet and its web design “Artifacts” feature are proving popular. Ask it to create a web app for you from a sketch or text prompt, and it will make it in a little frame with a toggle between live preview and code. Lots of fun demos on X, including Shubham Saboo doing an educational app for a paper, and obviously many people doing (or trying) mini games.

I have had a great time with this tool, tbh. It’s a bit like being a manager of a junior but talented web dev, and it helps if you know how to direct it. I spent a couple hours going back and forth on something — I had to ask for vanilla JS after it said it didn’t have some React libs installed (it defaults to React); eventually I moved the code to cursor.sh and activated the same model in their code editor. After a few edit exchanges, I used up my free requests and whipped out the credit card. I cancelled my ChatGPT Pro subscription.

Websim, the weirdness-inspired simulation engine created by Nous Research (written about in my posts here and here), has switched to Claude 3.5 Sonnet and the list of demos is growing, even with a homepage browser. I mean, it’s basically another frame around the Artifacts, but shareable? And focused on weird? But also a lot of WebGL? There’s even a “websim prompt library” page and some cool daily trending rundowns on X (like here). Lots are game-gen related. They even added an “edit” option (X link): if you right click on a page element, you can ask for an updated variant.

TLDraw’s web drawing app team has been doing AI-generated UI for a while now with this code branch, make-real (local starter repo), and their demo clips posted on X have always been awesome. Here’s one turning a screencap of a webpage into a wireframe for editing in their drawing tool. Here’s a visual programming tool based on it called Holograph that you can play with. Their recommended method is to draw and annotate your UI, and then the model updates the app. (So, less text prompt and more wireframe input.) Which brings us to….

Figma just announced a bunch of greatness (that’s a Fast Co article) including simpler UI, AI enhanced design editing and interactive prototype generation. But, they are in beta with a waitlist 😢. Designers are predictably freaking out. But I’m here to confirm none of these AI models can replace custom design work for a client, even if they speed up concept prototyping. I guess we just need the bosses to realize this.

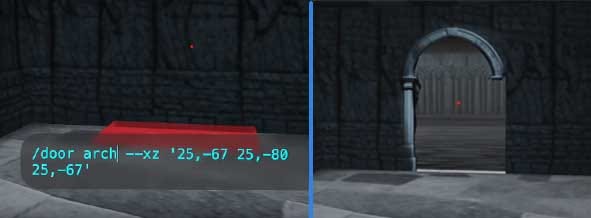

3D: EJones on X just released Latent-Clay, a local-first “AI World Sandbox” using Stable Diffusion 1.5, three.js, and a few other tools. It looks super fun. I wish Blockade Labs would do this? In particular, he has a video showing creating two gothic dungeony spaces and making a door between them. You move around the space and populate it using commands, but it’s a complete workflow environment set up for you. He has good videos.

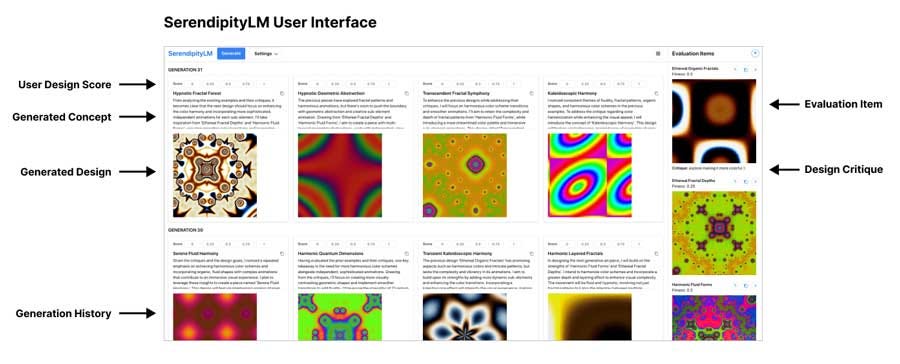

A bonus, similar goal but different tech: Samim’s cool SerendipityLM project aims to help people find cool spots in gen art— requiring an OpenAI key to run it, though. “SerendipityLM is an experiment in "Serendipity Engineering", that moves beyond traditional prompting and instead uses evolutionary interaction methods to generate complex generative artworks with Large Language Models. It turns the exploration of design spaces into a playful and serendipitous experience that investigates the boundaries LLM HCI.” This is a goal similar to some themes down in the Narrative section’s papers.

Bringing us to…

Interpretable Features for Exploring Generation Directions

Two awesome writeups and demos of Sparse Autoencoders (SAE) in one week, heading towards making better tools with interpretable generation controls: one for images and one for text. We met SAEs recently in the amazing Claude interpretation feature article and the Golden Gate Claude experiment. The gist behind the concept is to take a lot of embeddings and train another model on top of them, to try to find the interpretable features that are hiding in there. Then usually someone or somemodel “names” them for use and they can be inspected and turned up or down for effect. This is quite distinct from training a model in a style with a LoRA or Midjourney’s styles based on ratings; instead, it decomposes features in an existing model.
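For the coders, here is a rough sketch of that gist in plain Python. It is not the code from either writeup: the hand-set toy weights, the function names (`encode`, `decode`, `sae_loss`), and the `l1_weight` value are all assumptions for illustration. It only shows the common recipe of encoding an embedding into a wider, mostly-zero feature vector, decoding it back, and scoring both reconstruction error and how many features fired. A real SAE would learn the weights by minimizing this loss over many embeddings.

```python
# Minimal sparse autoencoder (SAE) forward pass in plain Python.
# All weights are toy values set by hand, purely for illustration.

def relu(values):
    return [max(0.0, v) for v in values]

def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def encode(embedding, w_enc, b_enc):
    """Map an embedding to non-negative feature activations."""
    pre = [p + b for p, b in zip(matvec(w_enc, embedding), b_enc)]
    return relu(pre)

def decode(features, w_dec):
    """Rebuild the embedding as a weighted sum of feature directions."""
    dim = len(w_dec[0])
    return [sum(f * direction[i] for f, direction in zip(features, w_dec))
            for i in range(dim)]

def sae_loss(embedding, features, reconstruction, l1_weight=0.1):
    """Reconstruction error plus an L1 penalty that encourages sparsity."""
    mse = sum((e - r) ** 2 for e, r in zip(embedding, reconstruction)) / len(embedding)
    sparsity = sum(abs(f) for f in features)
    return mse + l1_weight * sparsity

# A 2-dimensional embedding and 4 candidate features (wider than the input).
W_ENC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]
B_ENC = [0.0, 0.0, 0.0, 0.0]
W_DEC = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]  # one direction per feature

embedding = [0.5, -2.0]
features = encode(embedding, W_ENC, B_ENC)       # only two of four features fire
reconstruction = decode(features, W_DEC)
loss = sae_loss(embedding, features, reconstruction)
```

The “naming” step happens afterwards: someone looks at which inputs make each feature fire and gives it a label.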

⭐️ Interpreting and Steering Features in Images by Gytis Daujotas is a nice piece with a demo site and shared weights to play with the features and your own images. (And here’s a simple Colab for coders.) I spent a ton of time just with one image. I uploaded the image you see as “original” below (from Midjourney) to inspect the features it found for the version after embedding (top right); then I tried intensifying different features. The rows below show 2 different samples from different feature experiments. I guess the bottom feature is “creepy thing blends into the room.”

My favorite prominent feature was this unnamed #109479, which wtf and !

This is a good start of the knobs we want to be able to turn, to get the imagery we’re after! Way simpler than training something, although there will always be an artistic practice around creating and using personal trained models.

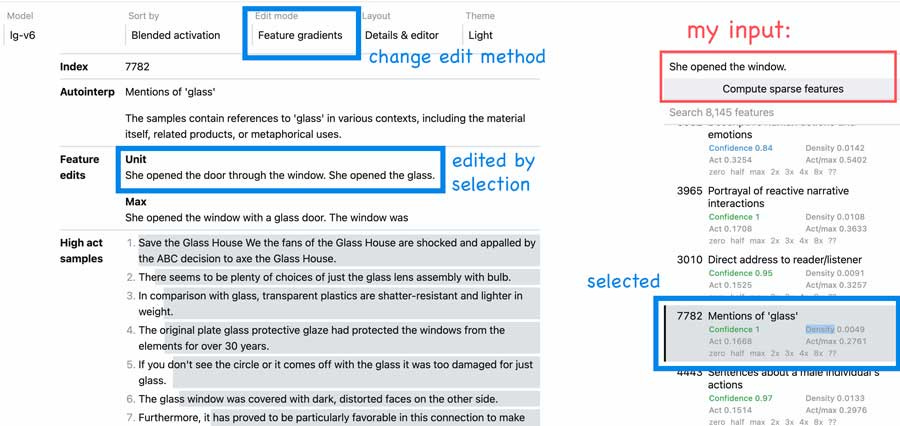

In text SAE-land, Notion researcher thesephist (Linus) posted about Prism, another generous exploration of training SAEs on datasets and then building a (slightly complicated) playground for us. Just like with the image SAE, we can “find the most strongly represented interpretable features for a given input. In other words, ask ‘What does the embedding model see in this span of text?’” Note, it may not be “right.” I can easily find weird errors. But this way lies creativity, perhaps, if not classification accuracy.

In Prism, on the right side, you can type or post some text to analyze. My sentence on the right was “She opened the window.” It thinks for a while, and gives you features it identified and confidence (based on the model you’ve selected!). Interestingly, in this example, it co-associates windows with glass and doors (fair?). It does get things wrong, depending on the size of the model you choose (this model concluded the text involved a male character). I annotated to give you some UI tips here:

You can also use the features to modify your text, for instance, as shown above — clicking on an “only” limitation feature, it changes the text to “She only opened the window.” In the shot above, it added the word “glass.” You can also combine things in “addition” mode, which is very interesting. This is not always successful or grammatical, a bigger problem compared to visual edits which are less noticeable when poor.
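A rough sketch of what “turning a feature up” or combining features can mean under the hood, assuming each feature corresponds to a direction vector in embedding space. This is not Prism’s actual code: `steer`, the feature names, and the toy vectors are hypothetical, and a real tool would still need to decode the edited embedding back into text, which is where the ungrammatical results can creep in.

```python
# Toy feature steering: nudge an embedding along named feature directions.
# Feature names and vectors are invented for illustration only.

FEATURE_DIRECTIONS = {
    "glass": [0.0, 1.0, 0.0],
    "only_limitation": [1.0, 0.0, 0.0],
}

def steer(embedding, strengths):
    """Add each feature's direction, scaled by its strength, to the embedding.

    Positive strengths turn a feature up, negative ones turn it down.
    Passing several features at once is a simple additive combination.
    """
    edited = list(embedding)
    for name, strength in strengths.items():
        direction = FEATURE_DIRECTIONS[name]
        edited = [e + strength * d for e, d in zip(edited, direction)]
    return edited

original = [0.5, 0.25, -1.0]
more_glass = steer(original, {"glass": 2.0})
combined = steer(original, {"glass": 2.0, "only_limitation": -0.5})
```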

Linus has good UMAP diagrams of the encoding spaces in Nomic atlases, e.g., sae-lg-v6-v2. He wraps up with another post on the idea of a “synthesizer for thought,” giving us real UI to control interventions on our text or images. We definitely need apps with UI other than text prompts and temperature sliders.

(Finally, just another reminder of Amelia Wattenberger’s work on these ideas too. And related: see these slides from UIST (ui conf.) by Bjoern Hartmann on better interfaces for working with AI models and exploration.)

Video & Audio App News

There’s always lots going on with research papers on indicating direction of animation, longer generation, and multiple object interactions, etc. I still suggest subscribing to DreamingTulpa’s newsletter for the extensive research rundowns, if you haven’t. (He also covers 3D and image gen research.)

🎞 Briefly, LumaLab’s Dream Machine has added keyframe start/end video clip interpolation, which is the bomb. We all want this. They have examples on their nice long doc page. Here’s a cool “fpv drone shot” created using it and bike footage (plus After Effects). Seems like it’s key to specify in the text prompt how you want the interp to work; I had poor results just giving two images and the queue was an hour long.

🎞 Runway’s Gen 3 is in alpha preview to selected Important People, and they are showing good results (Sora/DreamMachine-level; here’s a trippy clip on X from DreamingTulpa, and another cool clipset here, also X). Runway has more UI/UX than other tools, so this is good news.

🎧 ElevenLabs has released a free mobile app to read texts or webpages to you, using their voices (and servers). I really like this. I downloaded some epubs off Gutenberg Books and tried them. It ingests the text, and lets you follow along as it reads to you, so you can set the start point manually. It’s not going to match my favorite voice readers on LibriVox, but works if I’m looking for something specific or want hands-free consumption with text visible. They have a friendly feedback button prominently displayed. More languages are coming.

🧪 Code for real-time DiT based video generation with Pyramid Attention (proj), starting to appear in some ComfyUI workflows.

🧪 Panoramic 4D Gen at 4K — a wooo cherrypick because I like VR! Animates a large static panorama shot, which would look good in my Quest 3. “4K4DGen accepts a static panoramic image at a resolution of 4096×2048. Upon user interaction through designated clicks, it animates specific regions and converts them into 4D point-based representations. This functionality enables real-time rendering of novel views and dynamic timestamps, thereby enhancing immersive virtual exploration.” But no code.

🧪 A new good-looking video interpolation method with code: MoMo.

Procgen & Misc Web

Gamsey:

Ducky Fog — a surprisingly entertaining little web puzzle game in a Codepen.

Super Moxio — Mario level 1-1 done with typewriter characters for playing in Chrome.

Threescaper, a live web page for loading your Townscaper obj files so you can play with them in the web page (with WASD etc). I’ve posted this or relatives before, but I just ran into it again and still love it. You can wander their town already loaded.

Using Controlnet to animate the Game of Life. This is pretty amazing, actually:

From this input image:

I got:

JWZ web collage (h/t Clive Thompson). Uses images randomly found on the net. Click on one for details.

Design Tools people have built with p5.js, a directory of examples.

Layered Image Vectorization codebase.

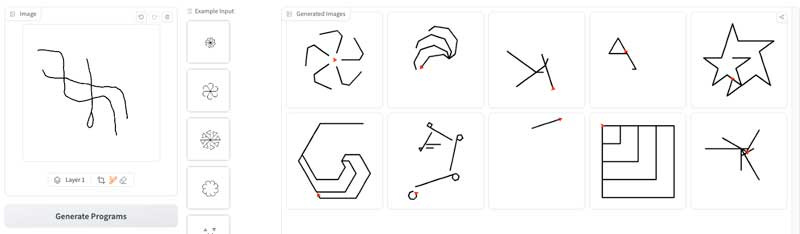

Visual Program Synthesis with LLMs (HF demo). They convert a simple vector image to ASCII and use a model trained to do turtle graphics. My inputs of sigil-like sketches do terribly :) But I’m still intrigued.

Cory Doctorow continues trying to explain how copyright doesn’t protect individual artists at all, just large companies. And recent takedowns are not good news.

Games News

How Generative AI Could Reinvent What It Means to Play — in MIT Tech Review (h/t Martin Pichlmair). This is a long meaty review of the new field of AI in generative gaming, with a lot of the major players from research & companies weighing in. Recommended read. It has a bit of a focus on NPCs but covers lots of good discussion areas.

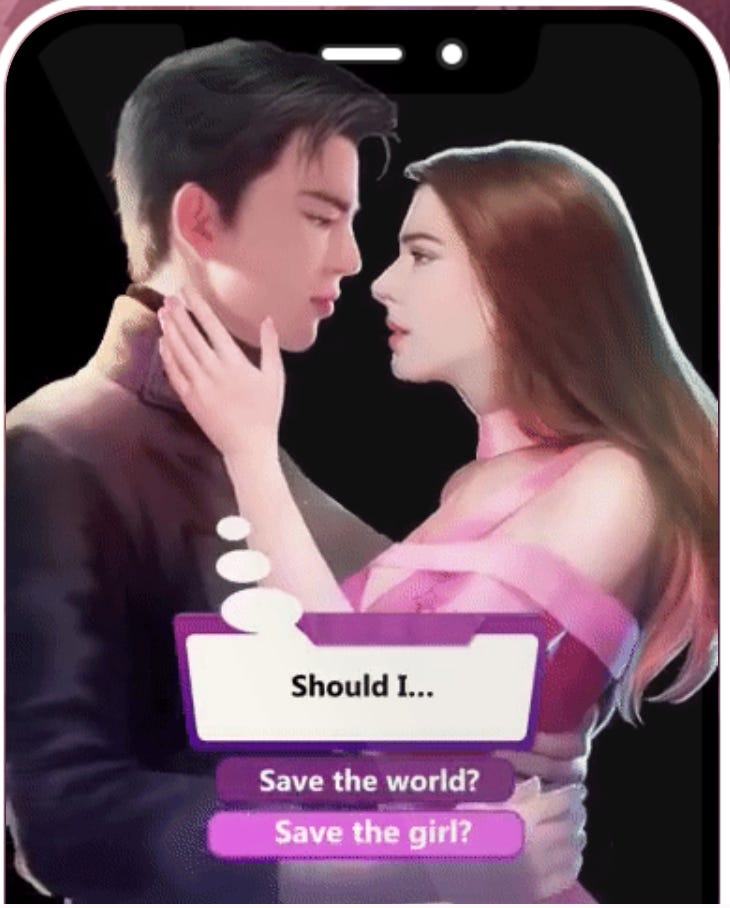

Interactive fiction has had a week! In the Guardian, a piece about making spicy mobile interactive stories—I’ve mentioned these app platforms before. Episode and Chapters are for writing and reading branching interactive visual novel type stories. They have a largely female reader/writer base. There’s a bit on Netflix’s move into mobile games in there too.

With titles like Billionaire Daddy, Pregnant by My Ex’s Dad and Once Upon a One Night Stand, the stories offered by Chapters may sound like pulp fiction. But many players come for more than just titillation. Pond said she started playing the games because she had a goal to read more. For her, the apps feel like more engaging romance novels, with all of the intrigue and plot twists of a torrid affair in which she gets to choose the ending.

⭐️ For a good take on what “interactive fiction” has meant longer term and among other gamers: Ars Technica’s great piece From Infocom to 80 Days is a history of int fic and text games that also covers MUDs and Usenet (where I come from). Lots of friends are cited in this well-researched piece by Anna Washenko. Also, Narrascope happened last week, run by cited Andrew Plotkin and friends; I’m looking forward to the recordings. (Here are the last few years’.)

The game industry is divided on writing romance and how to do it in games (back in Feb) in Kotaku. A good overview of various debates and how to cater to players’ tastes (or not).

“‘It’s impossible to play for more than 30 minutes without feeling I’m about to die’: lawn-mowing games uncut” in The Guardian, on those worky simulation games that are popular!

“Coco’s Coding Journey With Rosebud.” An interesting blog post about working with Rosebud AI to make AI-assisted games. Most of the games I’ve tried on Rosebud are not that good (often incredibly repetitive and with wonky UI), but I’m intrigued by the teaching/learning side of the platform. It’s like people getting into coding after playing Minecraft a lot, maybe?

Raph Koster’s announcement of Stars Reach. Koster’s book A Theory of Fun for Game Design is fantastic. I’m very into any open universe SF game, tbh. This is intense sim, too, but given the dude, I expect fun as well?

Stars Reach uses simulation to a degree never seen in an MMO before. We know the temperature, the humidity, the materials, for every cubic meter of every planet. Our water actually flows downhill and puddles. It freezes overnight or during the winter. It evaporates … [more] Stomp too hard on a rock bridge, and watch out, it might collapse under your feet. Dam up a river to irrigate your farm. Or float in space above an asteroid, and mine crystals from its depths.

Niantic Studio, in public beta and free, for developing 3D and XR game apps.

Narrative & Game Writing Research

A whole lot of papers just appeared because of conferences like ICCC24 (that’s a proceedings link - Conf on Computational Creativity), not to mention workshops. This is a top level skim — I’m splitting these up into slightly ad hoc groups like “discourse analytic,” “writing aids” etc.

Are LLMs smart about text reasoning? No:

“Can Language Models [GPT4] Serve as Text-Based World Simulators?” Tldr: no. They/it perform(s) poorly at understanding state changes from actions in text games.

LLMs also perform poorly at understanding fiction. Related to the text world simulation problem above? The new NoCha challenge (“novel challenge”) uses a hidden dataset of human-authored facts about plots in recent fiction books (i.e., not likely to be in the training data), wherein the model is given the book text and two statements about the book and asked to reply which is true and which is false. They do pretty badly, with GPT4o the best at just over 55%. Speculative fiction is especially hard for models to understand.
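To make a number like 55% easier to interpret, here is a small sketch of pair-level scoring. The scoring rule is an assumption, not confirmed here: it supposes each statement is judged true or false separately and a pair only counts when both labels are right, which would put random guessing at about 25%. The `pair_accuracy` helper and the data are hypothetical; check the paper for the exact metric.

```python
# Pair-level accuracy for true/false statement pairs.
# The "both must be right" rule is an assumption about the scoring.

def pair_accuracy(predictions, gold):
    """Fraction of pairs where BOTH statements were labeled correctly.

    predictions and gold are lists of (label_a, label_b) booleans,
    one tuple per pair of statements about the same book.
    """
    if not gold:
        raise ValueError("need at least one pair")
    correct = sum(1 for pred, truth in zip(predictions, gold) if pred == truth)
    return correct / len(gold)

# Hypothetical data: in each gold pair one statement is true and one is false.
gold = [(True, False), (False, True), (True, False), (False, True)]
predictions = [(True, False), (True, True), (True, False), (False, False)]

score = pair_accuracy(predictions, gold)  # half the pairs fully correct
```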

Building world knowledge for gen, because they’re bad at it otherwise:

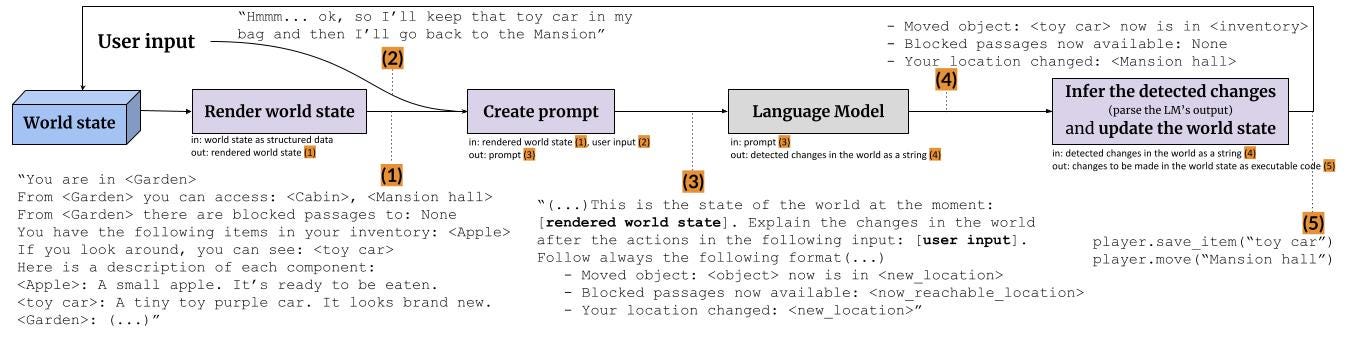

“PAYADOR: A Minimalist Approach to Grounding Language Models on Structured Data for Interactive Storytelling and Role-playing Games” in ICCC24. Also a bit in contrast to the findings above: “The PAYADOR approach to the world-update problem in Interactive Storytelling (and Role-playing Games) consists of grounding Large Language Models to structured data and use them to predict the outcomes of the player input.” It’s a world model tracker, basically, with code, for Items, Locations, and Characters.
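To make the “world model tracker” idea concrete, here is a minimal sketch. It is not PAYADOR’s code: the `World` class, `apply_outcome`, `describe`, and the outcome dictionary format are all hypothetical. In a grounded setup like the one described, the outcome would be predicted by the LLM from the player’s input; here it is hard-coded so the example runs on its own.

```python
from dataclasses import dataclass, field

@dataclass
class World:
    """Structured state: where each character is and who or what holds each item."""
    character_locations: dict = field(default_factory=dict)
    item_holders: dict = field(default_factory=dict)  # item -> location or character

def apply_outcome(world, outcome):
    """Apply a predicted outcome (hard-coded below; an LLM would supply it)."""
    for character, location in outcome.get("moved_characters", {}).items():
        world.character_locations[character] = location
    for item, holder in outcome.get("moved_items", {}).items():
        world.item_holders[item] = holder
    return world

def describe(world):
    """Render the state as text, e.g. to feed back into the next prompt."""
    lines = [f"{name} is at {place}"
             for name, place in sorted(world.character_locations.items())]
    lines += [f"{item} is with {holder}"
              for item, holder in sorted(world.item_holders.items())]
    return "\n".join(lines)

world = World(
    character_locations={"player": "kitchen"},
    item_holders={"key": "kitchen"},
)

# Pretend the model read "take the key and go to the cellar" and predicted:
outcome = {"moved_items": {"key": "player"}, "moved_characters": {"player": "cellar"}}
apply_outcome(world, outcome)
summary = describe(world)
```

Keeping the state in plain data means the story text can drift stylistically while the facts (who is where, who holds what) stay checkable.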

A related project, in feel anyway: Turning “known story worlds” like a book into interactive narratives, in “StoReys: A neurosymbolic approach to human-AI co-creation of novel action-oriented narratives in known story worlds.” “We build a knowledge representation for the domain in the form of a character-centric ontology (the symbolic part), and learn that representation automatically. Then, aided by instruction-tuned Large Language Models, we infer a novel storyline over that representation with active user interaction (the neural part). Automated experiments and a human evaluation show that the narratives generated are coherent, enjoyable, and of good quality.” There’s a lot of regular info extraction and NLP in this pipeline, might be of interest to Hidden Door folks!

I’ve been saving a ton on creativity and models, here are just a few recent ones:

“Homogenization Effects of Large Language Models on Human Creative Ideation” at ICCC24, a Kreminski joint. “ChatGPT users generated a greater number of more detailed ideas, but felt less responsible for the ideas they generated.” The last half of that sentence doesn’t bother me in a brainstorm context, but the homogenization does.

“Intelligent Go Explore,” code to make a model look at creative/interesting states in exploration including text worlds. There are a number in this vein recently.

“Enhancing Human Creativity with Aptly Uncontrollable Generative AI” by Iikka Hauhio at ICCC24. “Therefore, the generative AI tools need to be aptly uncontrollable, i.e. allow some guidance from the user while still be able to sometimes force the user to go to unmapped territories of the conceptual space.” This is also why Midjourney has had control values for increasing weirdness, for instance, in its controls set.

Generative plot/story:

In Games and NLP Coling Workshop Proceedings, 2024, a bunch including “Generating Converging Narratives for Games with Large Language Models,” a paper very much inspired by interactive CYOA stories.

“Evolutionary Plot Arcs for a Series of Neurally-Generated Episodes,” at ICCC24. More discourse structure control using knowledge bits. “The present paper explores a combination of evolutionary generation of season plot arcs–based on knowledge resources for plot structures–with neural generation of episode descriptions–prompted with fragments of season plot arc.”

Discourse analytic work:

Work from an Inworld.ai person, Alexey Tikhonov, “Branching Narratives: Character Decision Points Detection.” They define “a task of identification of points within narratives where characters make decisions that may significantly influence the story’s direction. We propose a novel dataset based on [CYOA] games graphs to be used as a benchmark for such a task.” They get good results, unlike the findings in NoCha and Text World simulators above. What’s especially interesting is using their trained model to try to segment fiction afterwards, such as Alice in Wonderland. I was unconvinced but interested.

A paper creating “a model for evaluating the thematic consistency of a narrative” by the fun Rafael Pérez y Pérez. Work extending MEXICA. I don’t buy all the model feedback, but it’s interesting to consider as an editor of your own work? Might belong below with…

Writing aids:

“Novelty: Optimizing StreamingLLM for Novel Plot Generation” — another writing help tool, which turns writing into a stream of question/answer with a writing aide.

We introduce the novel approach of treating novel-writing as a streaming "question-answer" chapter-generating conversation between user and assistant. We produce perceptible improvements in model creativity by building a rich novel plot generation model through fine-tuning Vicuna-7b with StreamingLLM on chapter-by-chapter detailed plot summaries. We also demonstrate that different training data formats and prompt engineering at inference time can produce greater diversity of output and help prevent repetitive rabbit-holing.

Not research: AI startup Autobiographer asks you the questions (CNET) instead, to help you document your life. What a great idea (but not sure it needs AI for this). It does engage in conversation with you, though perhaps a bit like Eliza did (“how do you feel about that?”). Seems a bit related to the AI-therapy startups appearing now. See also, “Turning the Tables on AI,” on ways to work creatively with AI input while writing.

Get in touch if you need more refs in this area, I’m suddenly swamped in them!

NLP & Data Science

JavaScript file parser for Parquet!

PyData London video talks are up. Some good RAG ones (retrieval augmented generation for search + LLMs). A few solid NLP ones too, like Sofie Van Landeghem’s “How to Avoid structural biases in evaluating your Machine Learning/NLP projects.” One of the early points here is that you can’t just say you’ll do all your extraction or labeling with an LLM if you don’t have labeled data to test that against to see if it’s any good. Duh to us, but it’s not obvious to many clients/bosses. On to:

NuExtract entity extraction models. (Have not tested yet, absolutely will.)
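Whichever extractor you try, the evaluation point from the PyData talk still applies: without a hand-labeled sample there is nothing to score the output against. A minimal sketch of that check follows. The entity sets are invented and `precision_recall_f1` is a hypothetical helper, not something from the talk or from NuExtract.

```python
# Score extracted entities against a small hand-labeled gold set.

def precision_recall_f1(predicted, gold):
    """Compare two collections of extracted items; return (precision, recall, f1)."""
    predicted, gold = set(predicted), set(gold)
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# Hypothetical example: entities a human labeled vs. what a model returned.
gold_entities = {"Stella", "Tennessee", "Daryl Gregory", "Revelator"}
llm_entities = {"Stella", "Tennessee", "Appalachia"}

precision, recall, f1 = precision_recall_f1(llm_entities, gold_entities)
```

Even a few dozen labeled documents scored this way will tell you whether the model is missing things (low recall) or inventing them (low precision).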

Yet another cobbled together PDF document parsing tool, gptpdf, using PyMuPDF + GPT-4 (via Luokai on Threads). It actually looks kind of reasonable and would work with Gemini 1.5 too, I think.

PAIR-code/llm-comparator: LLM Comparator is an interactive data visualization tool for evaluating and analyzing LLM responses side-by-side, developed by the PAIR team at Google.

“I Will Fucking Piledrive You If You Mention AI Again” is a well-circulated frustrated post from Ludicity. Yeppers, and I write this newsletter.

Ian Johnson’s Latent Scope will now export Data Map Plots!

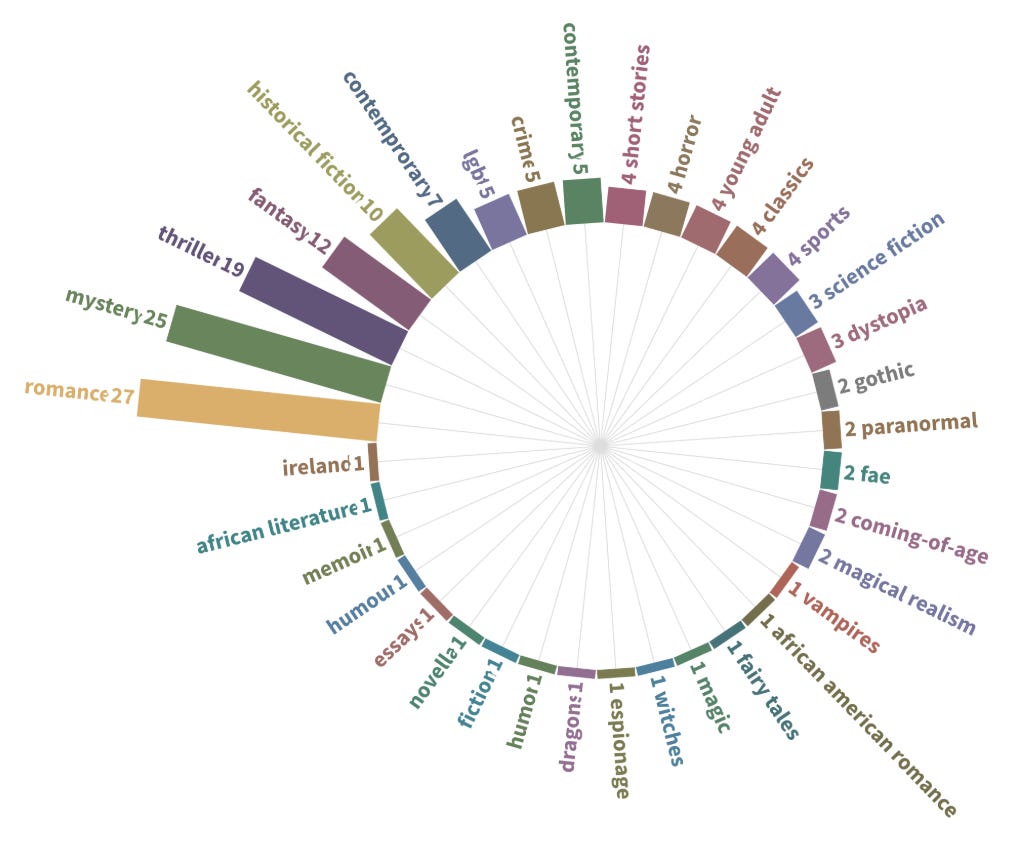

Recs

My favorite read of the month was:

⭐️ Revelator, by Daryl Gregory (fantasy/horror). A “southern gothic” set in 1930s+ Tennessee, in a small mountain community that worships a creature in a cavern who speaks to them through a chosen girl. Stella, a former mouthpiece, escaped and runs a moonshine business. She returns when her matriarch grandmother dies to find out what happened to the last of the girls and the cult; she finds men with An Interest. Some reminders of Our Share of Night and Lovecraftian stuff. Well-written and I was too engaged to highlight more than one thing, evidently.

I also read Gnomon (Harkaway) and Rakesfall, the new Vajra Chandrasekera novel, but my reactions to them were mixed and complicated. I put them in the separate recs mailing to paid subs, because I had a lot of things to say.

In TV, I watched a ton, which I am briefly listing here (more detail if you get the recs mailing):

😅 The Lazarus Project s1, mid-s2 (sf, British TV). It was on some “best of” lists. Yet Another Time Travel thingy, but by episode 3 it is dark, gritty, and unstoppably intense. The Lazarus team is a group who avert world-ending disasters (usually nuclear wars) with time loops.

🍿 The Red King (fantasy, British TV). Rather explicitly made for fans of The Wicker Man: a young rules-obsessed policewoman in disgrace is sent to a small Welsh island with a strange local cult.

🤷‍♀️ Outer Range, s1 and 2 (fantasy/sf, Prime). Interesting setting with a dark portal in a cattleman’s land and apparent time travel. Yes, more time.

🍿 Red Eye (thriller, British TV). Another popcorn one, set on a Chinese jet going from London to Beijing. People are being knocked off. Silly fun.

🍿 Bridgerton s3 (romance, Netflix). Wow, fully soft core. I liked, because I like Penelope and her writing career. I would watch a full season of Eloise and Benedict exploring the world, tbh. But uh 😒 Queen Charlotte I would classify as horror/romance. I read a lot about Mad King George.

My game situation is… fragmented and unfinished and involves a lot of dying in Fallout 3. Maybe more in two weeks?

A Poem: Viennese Waltz

Dresden china shepherdesses Whirl in the silver sunshine: Columbine stars Float in gauze petticoats of light… Little Columbine ghosts, wrinkled and old, Smelling of jasmine and camphor; Prim arms folded over immaculate breasts… The pirouetting tune dies… Stars and little faded faces, Waltzing, waltzing, Shoot slowing downward on tinkling music, Dusty little flowers, Sinking into oblivion… After the music, Quiet, The glacial period renewed, Monsters on earth, A mad conflagration of worlds on ardent nights… These too vanishing… Silence unending.

Egad, I need to send this so I can play some games and read. Stay cool out there, and vote correctly if you’re in France.

Best, Lynn (@arnicas on the sfka twitter, mastodon, bluesky and Threads)

We're oddly in sync. I read Revelator a few books ago (after it was itching to be read for years and somehow never got its turn.) Excellent. I'm also excited about Gregory's upcoming novel When We Were Real, teased on his website. It's about a group of people taking a road trip in a world that's known to be a simulation.

https://darylgregory.com/

And my last read was Harkaway's Titanium Noir (which was just OK.)

I wonder why you've paired Rakesfall and Gnomon. Is it because of Chandrasekera's Five Books article?

https://fivebooks.com/best-books/the-best-science-fantasy-vajra-chandrasekera/

FYI, Gen3 from RunwayML is out of closed testing and available to try, but I think only text (not image) init.